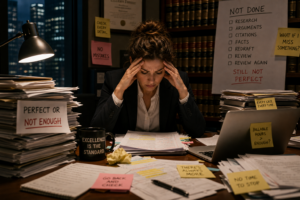

For more than a generation, the legal profession has faced relentless criticism concerning the psychological costs of legal training and adversarial practice. Attorneys are often described as overly analytical, excessively skeptical, hypervigilant, emotionally defended, and cognitively predisposed toward conflict. In therapeutic and interpersonal contexts, these traits can indeed create significant challenges. The same capacities that make a lawyer formidable in litigation can impair intimacy, sustain chronic stress activation, reinforce perfectionistic tendencies, and contribute to burnout, relational dissatisfaction, and emotional exhaustion. The legal mind, particularly the trial-trained mind, often struggles to “turn off” the adversarial framework through which it has learned to interpret the world.

Yet the emergence of artificial intelligence presents an unexpected and deeply consequential reframing of these traits. The very cognitive architecture that can create friction in personal life may become extraordinarily adaptive in the AI era. As large language models increasingly permeate legal practice, business operations, medicine, finance, education, and public discourse, society is beginning to discover that effective interaction with artificial intelligence is not primarily a technological skill. It is an interrogatory skill. It is a reasoning skill. It is an issue-spotting skill. It is a verification skill. And these happen to be the precise competencies cultivated through years of litigation practice.

The popular conception of AI often assumes that technological dominance will belong primarily to engineers, coders, or younger digital natives. While technical expertise unquestionably matters, this framing overlooks a more foundational reality: the AI systems now entering professional practice, large language models in particular, are linguistic systems. They operate through probabilistic language generation, and the quality of their output depends heavily on the quality of the inquiry. Put differently, these systems are often only as effective as the person questioning them. This places experienced litigators in an unusually advantageous position, because trial attorneys are, at their core, professional interrogators of information.

The deposition process provides perhaps the clearest parallel. Effective deposition questioning and effective AI prompting share a remarkably similar cognitive structure. A skilled litigator does not simply ask a witness for conclusions. The attorney first lays foundation, establishes definitions, narrows ambiguity, anticipates evasiveness, and incrementally constrains the range of acceptable responses. The litigator understands instinctively that the framing of the question often determines the quality and reliability of the answer.

This dynamic closely parallels sophisticated AI interaction. Novice AI users frequently pose broad, underdeveloped prompts and are then disappointed when the output is generic, inaccurate, or incomplete. The experienced litigator, by contrast, naturally approaches the model with disciplined inquiry. Rather than asking, “Analyze this contract,” the trial-trained attorney is more likely to ask the system to identify indemnification provisions that asymmetrically shift risk, evaluate whether those provisions conflict with limitation-of-liability clauses, isolate carve-outs that may undermine enforceability under California law, and assess how courts have interpreted analogous language in comparable contexts. This is not merely better prompting. It is the direct application of litigation cognition to machine interaction.

The similarities deepen further when one examines how litigators respond to ambiguity and evasion. During a deposition, experienced trial counsel quickly detects when a witness is being non-responsive, hedging, overstating certainty, or subtly modifying prior testimony. Trial attorneys are trained to hear qualification language with unusual precision. They recognize the significance of phrases such as “generally,” “to the best of my recollection,” “typically,” or “I believe.” They understand that uncertainty is often embedded within seemingly confident testimony.

Large language models exhibit strikingly analogous patterns. AI systems routinely generate responses that appear authoritative while quietly embedding uncertainty markers, assumptions, or probabilistic approximations. Less sophisticated users may overlook these subtleties entirely because the response “sounds right.” The trial-trained mind, however, has spent years learning not to confuse confidence with reliability. Litigators are conditioned to distinguish genuine knowledge from plausible narrative construction. In the AI context, this becomes extraordinarily valuable, because one of the defining risks of modern language models is confabulation, often called “hallucination”: the generation of coherent but fabricated information.

Importantly, experienced litigators do not merely react to inaccurate testimony; they actively stress-test it. They probe for inconsistency. They revisit prior statements. They ask the same question from different angles. They identify gaps in chronology, unsupported assumptions, and unexplained leaps in logic. This adversarial verification process is deeply embedded in legal training. Attorneys are taught from the first year of law school never to accept assertions at face value. Every proposition must be sourced, challenged, contextualized, and reconciled against competing authority.

This disposition may become one of the most valuable professional instincts of the AI era. The dangerous AI user is not necessarily the uninformed user, but the overly trusting one. Artificial intelligence systems can produce responses that are elegant, articulate, and catastrophically wrong. The individuals most vulnerable to misuse are often those who lack disciplined skepticism and mistake fluency for accuracy. Attorneys, particularly litigators, possess unusually strong resistance to this specific failure mode because legal training systematically conditions practitioners to distrust unsupported conclusions until they are independently verified.

The connection between issue-spotting and prompt engineering is equally significant. One of the central intellectual tasks of legal education involves decomposing complex factual scenarios into discrete legal questions. Law students are trained to separate relevant from irrelevant facts, identify latent legal issues embedded within ambiguous narratives, and recognize how changing a single factual variable may alter the governing legal analysis. This mode of cognition mirrors the precise mental process required for sophisticated AI utilization.

The most effective AI users are rarely those asking broad conceptual questions. They are the individuals capable of breaking complex problems into component analytical tasks. Effective prompt engineering requires contextual framing, sequential reasoning, role assignment, specification of objectives, identification of constraints, and iterative refinement. These are not foreign processes to litigators. They are deeply familiar professional reflexes.

Indeed, the iterative nature of AI interaction closely resembles trial preparation itself. Trial attorneys rarely expect perfect testimony, perfect strategy, or perfect evidentiary rulings on the first pass. Litigation is inherently iterative. Attorneys refine theories as new facts emerge. They adjust questioning based upon responses received. They narrow arguments, test assumptions, and continuously recalibrate strategy in real time. Sophisticated AI use similarly depends upon iterative refinement rather than one-time inquiry. The attorney who understands how to progressively tighten factual assumptions and redirect inquiry based upon prior outputs may possess a substantial advantage over professionals who approach AI as a static search engine rather than a dynamic reasoning partner.

There is also a deeper psychological reason why litigators may adapt particularly well to AI integration. Trial work requires comfort operating under conditions of uncertainty. Litigators routinely make strategic decisions with incomplete information, conflicting testimony, and evolving factual records. They are trained to tolerate ambiguity while continuing to function decisively. This becomes increasingly important in the AI era because artificial intelligence does not eliminate uncertainty; rather, it amplifies the volume and speed of information generation. The professional challenge shifts from information scarcity to information discernment. The central question is no longer whether information can be generated, but whether it can be trusted, contextualized, and strategically deployed.

The trial-trained mind is unusually well-equipped for this environment because litigation has always involved managing informational instability. Attorneys understand that every narrative is potentially incomplete, every source potentially biased, and every factual representation potentially vulnerable to impeachment. While this cognitive orientation may become exhausting when generalized indiscriminately to personal relationships and daily life, it becomes immensely adaptive when interacting with probabilistic AI systems.

This reframing is particularly important given the growing anxiety within the legal profession concerning artificial intelligence. Many attorneys fear that AI threatens to commoditize legal reasoning, automate substantive analysis, and diminish the value of legal expertise. Certain categories of legal work will undoubtedly become increasingly automated. But the emergence of AI may simultaneously elevate the importance of precisely those higher-order cognitive skills most developed through litigation practice: structured inquiry, adversarial testing, issue decomposition, evidentiary skepticism, and strategic reasoning under uncertainty.

The legal profession may therefore face an ironic reversal. For years, attorneys have been told that many of their core psychological adaptations are maladaptive remnants of adversarial conditioning. In some domains, this critique remains valid. Hypervigilance, compulsive analytical thinking, and chronic adversarial orientation can impose genuine psychological costs. Yet the AI era may reveal that these same traits possess substantial market and intellectual value when properly directed. The legal mind is not merely a pathology-producing instrument. It is also a highly specialized cognitive tool forged for environments requiring disciplined skepticism, precision questioning, and probabilistic reasoning.

Artificial intelligence happens to be such an environment.

This does not negate the emotional and psychological costs associated with legal training. Nor does it romanticize burnout, chronic stress, or relational impairment. The point is more nuanced. Human traits are context-dependent. The same sharpened edges that may wound attorneys interpersonally can create profound professional advantage in the appropriate setting. The arrival of artificial intelligence may represent one of the first major technological shifts in modern history that rewards, rather than diminishes, the uniquely adversarial architecture of the trial-trained mind.

In this respect, litigators may discover something unexpected in the AI era: the cognitive framework they spent decades developing was not becoming obsolete. It was preparing them for a world in which the central professional skill is no longer merely possessing information, but knowing precisely how to interrogate it.